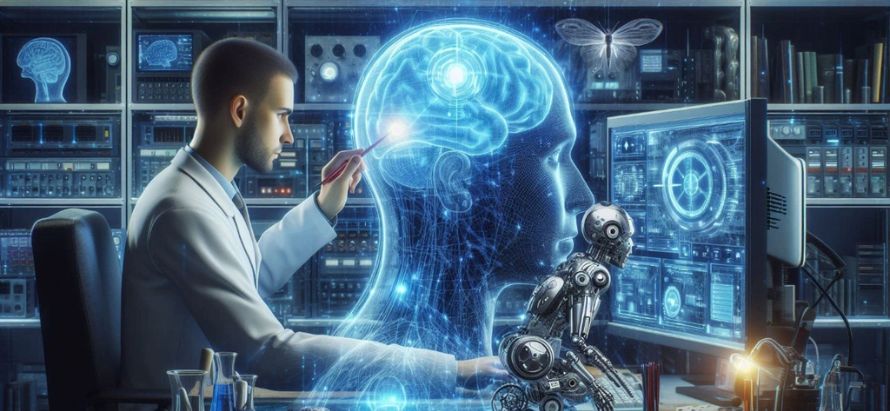

Scientists have developed a non-invasive AI system that uses fMRI data to capture brain activity and convert it into descriptive, coherent text. The technique, called mind-captioning, decodes what people see or imagine into narrative sentences without relying on the brain’s traditional language centers.

Glimpse:

A new AI breakthrough called mind-captioning can translate your inner scenes into descriptive text. Using fMRI scans, the system captures visual and semantic brain activity and maps it through a language model to generate sentences that reflect what you’re thinking or remembering. Unlike earlier brain-to-text methods, this approach doesn’t rely on language areas, making it potentially powerful for nonverbal communication.

Researchers have unveiled a groundbreaking technique known as mind-captioning, which uses non-invasive brain imaging (fMRI) to decode a person’s visual or imagined thoughts into fluent, structured text. The approach builds on deep language models to interpret semantic features from brain scans and generate descriptive sentences that mirror what participants are seeing or recalling.

Participants in the study watched naturalistic video clips rich in detail while their brain activity was recorded. The AI model then matched this activity to semantic features generated by a language model. Through iterative optimization (swapping words, adjusting phrases), the system produced captions that closely aligned with participants’ actual mental images. Impressively, it worked even when brain regions traditionally associated with language were not used, suggesting that meaning is distributed more broadly in the brain.
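To make the idea of iterative optimization concrete, here is a minimal toy sketch in Python. It is not the study's actual method: the tiny vocabulary, the bag-of-words `features` function (a stand-in for real language-model features) and the `optimize_caption` helper are all assumptions made up for illustration, and the "decoded" target is simulated from a known sentence rather than from brain data. The sketch only shows the general loop: start from a placeholder caption, try single-word swaps, and keep whichever swap brings the caption's features closest to the target.

```python
import math

# Hypothetical toy vocabulary; a real system would draw on a full language model.
VOCAB = ["a", "dog", "cat", "runs", "sleeps", "on", "the", "beach", "sofa", "person", "jumps"]

def features(words):
    """Toy stand-in for language-model features: a bag-of-words count vector."""
    return [words.count(w) for w in VOCAB]

def cosine(u, v):
    """Cosine similarity between two count vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u) * sum(b * b for b in v))
    return dot / norm if norm else 0.0

def optimize_caption(target, length, max_rounds=50):
    """Greedy search: each round, try every single-word swap and keep the best one."""
    caption = [VOCAB[0]] * length              # placeholder starting caption
    best = cosine(features(caption), target)
    for _ in range(max_rounds):
        best_candidate = None
        for pos in range(length):
            for word in VOCAB:
                candidate = caption[:pos] + [word] + caption[pos + 1:]
                score = cosine(features(candidate), target)
                if score > best:               # only strictly better swaps count
                    best, best_candidate = score, candidate
        if best_candidate is None:             # no swap helps any more: stop
            break
        caption = best_candidate
    return caption, best

if __name__ == "__main__":
    # Simulated "decoded" features: in the study these would come from fMRI data.
    hidden = "a dog runs on the beach".split()
    caption, score = optimize_caption(features(hidden), length=len(hidden))
    print(caption, round(score, 3))
```

Because the toy features ignore word order, the search can only recover which words belong in the caption, not how they are arranged. Producing fluent sentences is where a real language model would come in.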

This technique also generalized to recalled content: when participants imagined the video clips, the system could still generate coherent descriptions, indicating the method’s robustness. The potential applications are profound, from helping individuals who struggle to communicate, such as those with aphasia, to creating new brain-machine interfaces that interpret thought directly.

However, the technology raises serious ethical questions. Decoding someone’s thoughts, even nonverbally, touches on privacy and consent. As AI gets closer to “reading the mind,” researchers are calling for tight guardrails to protect individual autonomy.

“It’s possible to generate coherent, meaningful text from brain activity not by decoding language itself, but by interpreting the nonverbal representations, a new window into how the brain transforms experience into expression.”

By HB Team